AI in contract management: how to avoid data exposure and compliance risks

AI is transforming contract management for legal, procurement, and compliance teams through faster review cycles, automated risk detection, and continuous compliance checks. These efficiencies can significantly reduce manual workload and improve visibility across large contract portfolios.

However, AI adoption in contract workflows also introduces data exposure and regulatory risks, particularly for organizations operating in regulated sectors. In the EU, these concerns are reinforced by the EU AI Act, which requires strict oversight of high-risk AI systems such as those used in legal and compliance decision-making, with obligations for high-risk systems applying from 2 August 2026.

A key architectural decision for organizations adopting AI in contract lifecycle management (CLM) involves how and where the AI is deployed. Understanding where AI systems run, how they process data, and what safeguards exist is critical for CLM buyers and CIOs.

This article will cover:

- Key risks when using AI in contract management for regulated sectors

- Why public AI tools bring unique exposure and compliance concerns

- Mitigation strategies, from vendor selection to technical controls

- How to build a practical governance and implementation roadmap

Primary risks in highly regulated industries

When adopting AI in contract management, one of the most important considerations is how the AI system is deployed and where contract data is processed.

In practice, organizations often choose between two main approaches:

- Highly capable proprietary AI models running on external cloud infrastructure (often hosted outside the EU). These systems may offer strong performance and convenience but can raise concerns around data residency, transparency, and governance.

- Private or self-hosted AI models, including open-source LLMs deployed on protected in-house infrastructure or trusted European cloud environments. These approaches provide stronger control over sensitive contract data but may require additional operational effort or different performance trade-offs.

For regulated industries, this architectural choice can significantly influence compliance posture, risk exposure, and long-term governance capabilities.

Organizations such as EU banks, insurers, healthcare providers, and life sciences companies must already comply with regulations including GDPR, NIS2, DORA, PSD2, and EHDS, alongside sector-specific frameworks such as CRD IV for financial institutions. Introducing AI into contract management can increase the potential exposure of sensitive information across the entire contract lifecycle, from document intake to long-term archival.

As a result, organizations must carefully evaluate the risks associated with AI-powered contract workflows. The most significant risks typically fall into four categories.

1. Data exposure through cloud or third-party AI services

Sensitive contract information is frequently entered into AI tools for analysis, summarization, or clause extraction. If these tools run on external infrastructure, contract data may be transmitted to or processed on servers outside EU jurisdiction.

In some public AI systems, inputs may also be retained in logs or used to improve models unless explicit safeguards are in place.

Real-world incidents highlight the consequences. In 2023, employees at Samsung inadvertently uploaded confidential source code into ChatGPT, demonstrating how easily sensitive corporate information can be exposed through generative AI tools.

For organizations handling regulated data, this loss of control over sensitive contractual information represents a serious governance risk.

2. Shadow AI and unauthorized use

Even when companies establish internal AI policies, employees often experiment with public AI tools independently.

A Cybernews survey from August 2025 (1,003 U.S. employees) found that 59% use unapproved AI tools at work, and 75% share sensitive information, including internal documents and legal data.

This type of uncontrolled AI usage, often called shadow AI, can:

- Breach confidentiality clauses

- Circumvent security policies

- Create unmonitored data flows to external systems

- Bypass internal audit and compliance processes

For regulated industries, shadow AI introduces compliance risks that are difficult to detect or monitor.
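One way to make shadow AI visible is to check outbound web traffic against a list of known public AI endpoints. The following is a minimal sketch, not a complete detection method: the host names, the record layout (`user`, `url`), and the `find_shadow_ai` helper are all hypothetical, and a real deployment would read from whatever proxy or gateway logs the organization already collects.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical host lists; a real deployment would maintain these centrally.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}
KNOWN_PUBLIC_AI_HOSTS = {"chat.example-ai.com", "api.example-llm.com"}


def find_shadow_ai(proxy_records):
    """Count requests per (user, host) to known public AI hosts that are not approved."""
    hits = Counter()
    for record in proxy_records:
        host = (urlsplit(record["url"]).hostname or "").lower()
        if host in KNOWN_PUBLIC_AI_HOSTS and host not in APPROVED_AI_HOSTS:
            hits[(record["user"], host)] += 1
    return hits


# Illustrative records only; field names are assumptions.
records = [
    {"user": "alice", "url": "https://chat.example-ai.com/v1/chat"},
    {"user": "alice", "url": "https://chat.example-ai.com/v1/chat"},
    {"user": "bob", "url": "https://ai.internal.example.com/summarize"},
]
shadow_hits = find_shadow_ai(records)
```

A report built from `shadow_hits` only shows traffic to hosts already on the list, so it complements rather than replaces training and policy.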

3. Poor data retention and monitoring practices

AI systems often generate additional data artifacts such as logs, analytics outputs, or intermediate processing results.

Without careful governance, these artifacts can become hidden repositories of sensitive information that fall outside normal contract management retention policies.

This can create several problems:

- Indefinite retention of sensitive data

- Poorly controlled internal access

- Difficulty enforcing deletion requests

- Conflicts with GDPR principles (e.g. data minimization and purpose limitation)

Organizations must therefore treat AI-generated data with the same rigor applied to contract records themselves.
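As an illustration of applying retention rules to AI artifacts, the sketch below flags logs and intermediate results that have outlived an assumed retention window. The artifact kinds, the retention periods, and the `Artifact` and `expired_artifacts` names are hypothetical; actual periods are a legal and compliance decision.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per artifact kind.
RETENTION = {
    "prompt_log": timedelta(days=30),
    "intermediate_result": timedelta(days=7),
    "analytics_output": timedelta(days=365),
}


@dataclass
class Artifact:
    artifact_id: str
    kind: str
    created_at: datetime


def expired_artifacts(artifacts, now):
    """Return artifacts past their retention window.

    Artifacts of an unknown kind are flagged as well, so that nothing
    escapes review simply because no policy was defined for it.
    """
    expired = []
    for artifact in artifacts:
        window = RETENTION.get(artifact.kind)
        if window is None or now - artifact.created_at > window:
            expired.append(artifact)
    return expired


now = datetime(2026, 1, 31, tzinfo=timezone.utc)
created = datetime(2026, 1, 15, tzinfo=timezone.utc)
artifacts = [
    Artifact("a1", "prompt_log", created),           # 16 days old, within 30 days
    Artifact("a2", "intermediate_result", created),  # 16 days old, beyond 7 days
    Artifact("a3", "embedding_cache", created),      # no policy defined
]
flagged_ids = [a.artifact_id for a in expired_artifacts(artifacts, now)]
```

Flagging unknown kinds by default is a deliberate choice here: it surfaces the "hidden repositories" described above instead of silently keeping them.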

4. LLM trustworthiness: bias, errors, and accountability

Another critical concern is the trustworthiness of large language models (LLMs) used in contract analysis.

Recent research highlights that LLM trustworthiness is multi-dimensional, typically spanning areas such as accuracy, reliability, robustness, fairness, transparency, privacy protection, safety, and accountability. These dimensions are increasingly used as a framework for evaluating whether AI systems can be safely deployed in sensitive domains such as legal analysis and regulated industries (see the overview and full research paper).

Key questions organizations must address include:

- How reliable are AI outputs for legal interpretation?

- Who is responsible when AI recommendations are wrong?

- How transparent is the model about uncertainty or confidence levels?

Several mitigation approaches are commonly used:

- Human-in-the-loop review for legally significant outputs

- Confidence scores or explanation layers for AI results

- Clear governance defining when AI assistance is acceptable and when expert review is required
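These mitigation approaches can be combined into a simple routing rule. The sketch below is one possible shape for it, assuming the AI tool reports a confidence score between 0 and 1; the clause types, the threshold value, and the `Finding` and `route` names are hypothetical and would need to be defined and calibrated by each organization.

```python
from dataclasses import dataclass

# Hypothetical list of clause types that always require expert review.
SIGNIFICANT_CLAUSES = {"liability", "indemnification", "termination", "data_protection"}

# Assumed cut-off; it should be calibrated against human-reviewed samples.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class Finding:
    clause_type: str
    confidence: float  # model-reported score in [0, 1]


def route(finding):
    """Decide whether an AI finding needs expert review."""
    # Legally significant clauses go to a human regardless of confidence.
    if finding.clause_type in SIGNIFICANT_CLAUSES:
        return "expert_review"
    # Low-confidence output on any clause also goes to a human.
    if finding.confidence < CONFIDENCE_THRESHOLD:
        return "expert_review"
    return "auto_accept"


decisions = [
    route(Finding("liability", 0.99)),
    route(Finding("renewal_date", 0.60)),
    route(Finding("renewal_date", 0.95)),
]
```

Checking clause significance before confidence means a high score can never bypass review for the clauses that matter most.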

AI-powered search and summarization tools also process historical contract archives, meaning trustworthiness concerns extend beyond new contracts to existing document repositories.

Mitigation strategies for legal leaders

Organizations can manage AI risks in contract management through a layered governance and technical approach.

Vendor and policy controls

Careful vendor selection and clear internal policies form the foundation of safe AI adoption.

Key controls include:

- Ensuring customer data is never used for model training without explicit consent

- Requiring EU-based data processing and clear data residency guarantees

- Verifying strict retention policies and comprehensive audit logging

- Classifying contracts by sensitivity and restricting public AI tools for personal, financial, or health-related data

- Requiring human review for AI outputs that influence legal or financial decisions
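The classification control above can be expressed as a small policy gate. This is a sketch under stated assumptions: the tag names, the three sensitivity levels, and the target labels `public_ai` and `private_ai` are hypothetical, and the mapping from level to allowed target is a policy decision rather than a fixed rule.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3  # personal, financial, or health-related data


# Hypothetical data-category tags assigned at contract intake.
RESTRICTED_TAGS = {"personal_data", "financial_data", "health_data"}


def classify(tags):
    """Map intake tags to a sensitivity level."""
    tags = set(tags)
    if tags & RESTRICTED_TAGS:
        return Sensitivity.RESTRICTED
    if "confidential" in tags:
        return Sensitivity.INTERNAL
    return Sensitivity.PUBLIC


def allowed_targets(sensitivity):
    """Return the AI deployment targets a contract may be sent to."""
    if sensitivity is Sensitivity.PUBLIC:
        return {"public_ai", "private_ai"}
    # Anything internal or restricted stays on private infrastructure.
    return {"private_ai"}


level = classify({"health_data", "confidential"})
targets = allowed_targets(level)
```

In practice such a gate would sit in front of any integration that forwards contract text to an AI service, so the decision is enforced rather than left to individual users.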

Some organizations also choose to fine-tune open-source models on their own contract data, provided the model is deployed and maintained on secure internal infrastructure. In controlled environments, this can improve accuracy while preserving full data ownership.

Technical and operational steps

Beyond policy controls, organizations should adopt technical measures that reduce operational risk.

Examples include:

- Deploying private or self-hosted AI models running on protected infrastructure or trusted EU cloud providers

- Monitoring internal AI usage and maintaining clear logs of AI-assisted contract actions

- Limiting automation to lower-risk tasks, such as identifying key metadata or obligation reminders, while requiring review mechanisms for legally significant clauses

These safeguards ensure AI acts as a decision-support tool rather than a fully autonomous legal actor.
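To illustrate what a log of AI-assisted contract actions might record, the sketch below appends entries to an in-memory list and links each entry to the previous one with a SHA-256 hash. The hash chain is an optional technique added here for tamper evidence, not something the controls above require, and the field names and the `append_entry` and `verify` helpers are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    """Hash an entry body using a stable JSON encoding."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, user, action, contract_id, model, timestamp):
    """Append an audit entry whose hash covers the previous entry's hash."""
    body = {
        "user": user,
        "action": action,
        "contract_id": contract_id,
        "model": model,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    log.append({**body, "hash": _digest(body)})
    return log[-1]


def verify(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "alice", "summarize", "C-1001", "private-llm", "2026-01-15T09:00:00Z")
append_entry(audit_log, "bob", "extract_clauses", "C-1002", "private-llm", "2026-01-15T09:05:00Z")
chain_ok = verify(audit_log)
```

A production system would persist entries to append-only storage; the in-memory list only shows the structure of the record and the verification step.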

Implementation roadmap

Organizations adopting AI in contract management should take a phased approach:

- Assess current exposure: identify where employees already use AI tools and map data flows involving contract data.

- Pilot controlled use cases: start with anonymized or lower-risk contract datasets.

- Select compliant platforms: prioritize CLM vendors offering private AI, EU infrastructure, and strong compliance features.

- Train internal teams: educate employees on risks associated with public AI tools and best practices for anonymization.

- Continuously monitor and adapt: review AI logs, update policies, and prepare for evolving regulatory frameworks.
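For the pilot and training steps, anonymization can start with simple pattern-based redaction before any contract text reaches an AI tool. The following is a minimal sketch with assumed patterns: it covers only email addresses and IBANs written without spaces, and the `redact` helper and placeholder labels are hypothetical. Names, addresses, and other free-text identifiers would need additional techniques.

```python
import re

# Assumed patterns; they are intentionally simple and will miss many formats.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IBAN_PATTERN = re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b")


def redact(text):
    """Replace email addresses and compact IBANs with placeholder labels."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = IBAN_PATTERN.sub("[IBAN]", text)
    return text


sample = "Contact jane.doe@example.com; pay to DE89370400440532013000."
redacted = redact(sample)
print(redacted)
```

Pattern-based redaction reduces exposure but does not amount to anonymization in the GDPR sense, so redacted datasets should still be handled as potentially sensitive.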

With EU AI Act enforcement approaching in 2026, organizations that prioritize private AI deployments and strong governance frameworks will be better positioned to innovate safely.

🔑 Key takeaways

- AI in contract management brings efficiency but raises complex data exposure and compliance issues for regulated industries.

- Frameworks like the EU AI Act, GDPR, and sector regulations mean AI adoption in contract management must be carefully governed.

- Shadow AI, i.e. employees using unapproved tools, can bypass internal controls and increase the risk of confidential data leaks.

- Effective AI risk management combines strict vendor vetting, tailored governance, and technical controls.

- CLM buyers should prioritize platforms offering private AI models, EU-hosted infrastructure, and robust audit capabilities.

FAQs

How does AI help with contract management?

AI can accelerate contract review, identify risky clauses, summarize agreements, and automate monitoring tasks, allowing legal teams to handle larger contract volumes more efficiently.

Why are public AI tools risky for contract data?

Public AI tools may store or process uploaded contract data on external servers, sometimes outside EU jurisdictions, and in some cases may retain or reuse data for training, creating confidentiality and compliance risks.

Which sectors face the greatest risks?

Highly regulated sectors such as banking, insurance, healthcare, and life sciences face the greatest risks because they must comply with strict regulations including GDPR, NIS2, DORA, and sector-specific frameworks.

How can organizations reduce AI-related risks in contract management?

Organizations can reduce AI-related risks by choosing AI solutions with EU-based or private infrastructure, ensuring customer data is not used for training without consent, restricting the use of public AI tools for sensitive contracts, and maintaining human review for legally significant decisions. Clear internal policies, monitoring of AI usage, and systems that provide transparency into AI outputs also help manage risk.

What changes with the EU AI Act?

Starting August 2, 2026, the EU AI Act will impose stricter controls on high-risk AI systems, including those used for document analysis and decision support, requiring stronger governance, transparency, and compliance measures.

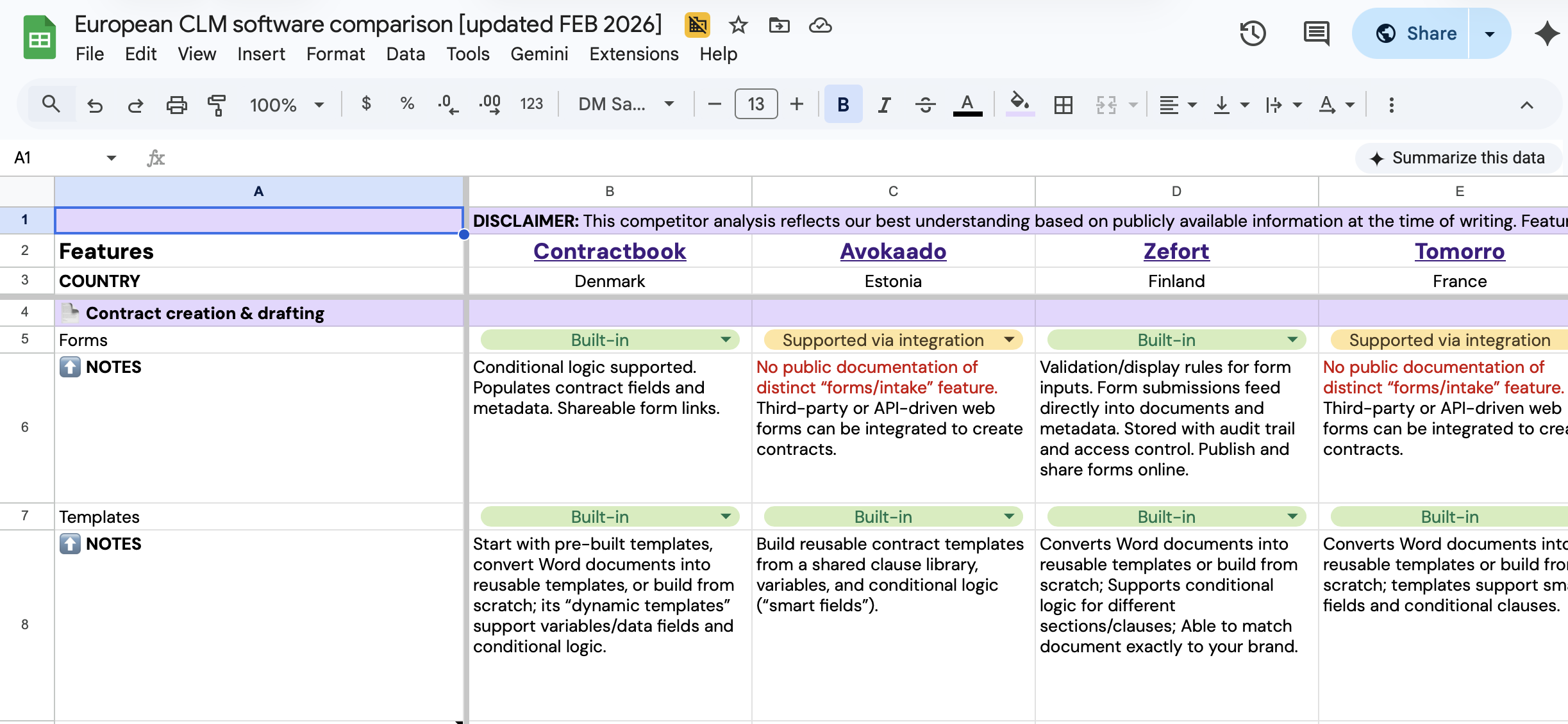

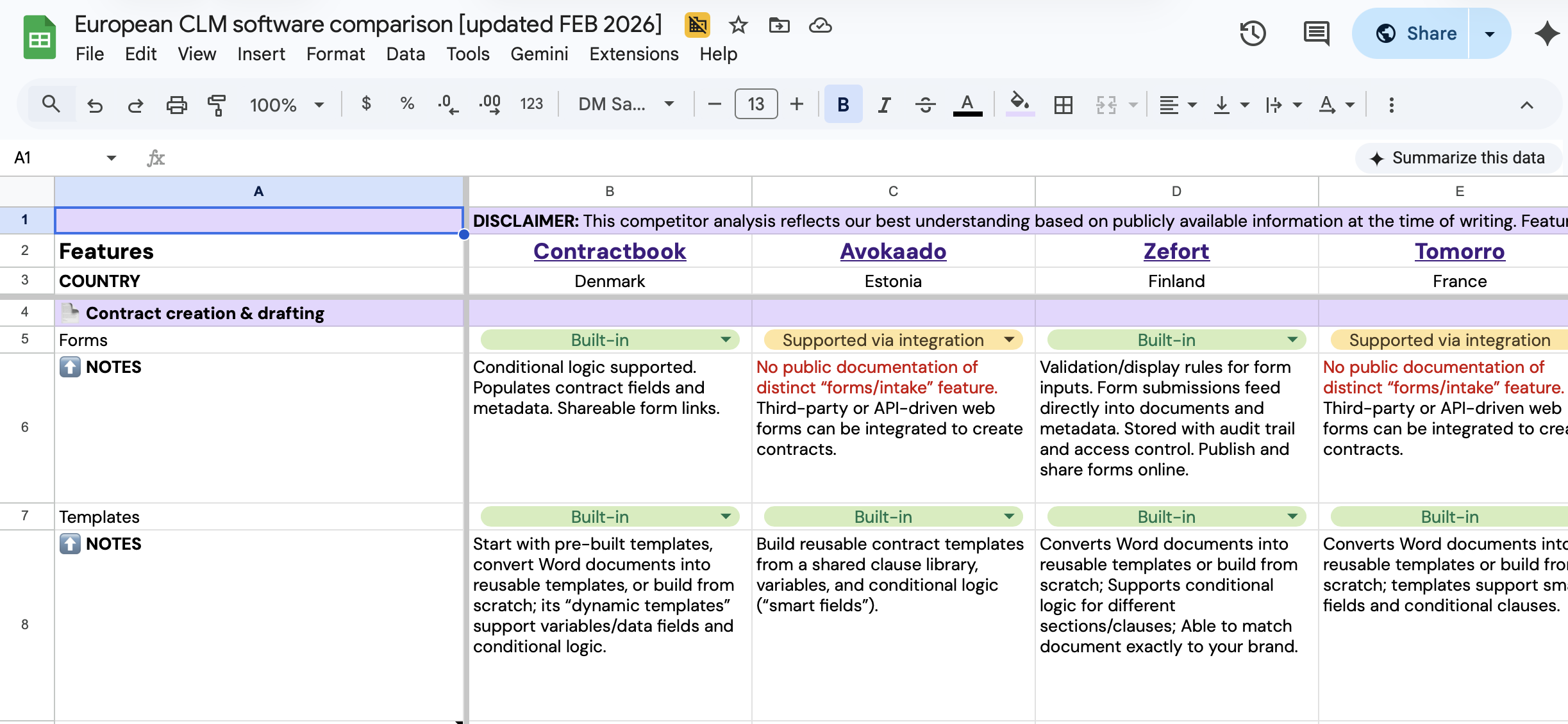

Compare European CLM leaders

Get a comprehensive breakdown of the top CLM solutions in one spreadsheet.
