AI prompting for contracts: How to get accurate, usable results

AI can speed up contract work, but poor prompting leads to unreliable results. In contract management, that creates real risk. You need outputs you can trust, verify, and use.

This guide explains how to write structured AI prompts that produce accurate, usable contract data in real workflows.

What you’ll learn:

- Why most AI prompts fail in contract management

- How to structure prompts for consistent results

- How to control AI output and avoid hallucinations

- How to apply prompting in real contract workflows

- How to improve prompts over time

Why most AI prompts fail in contract management

Most AI prompts fail because they are too vague. Common issues include:

- No defined role or perspective

- No clear source limitations

- No structured output format

- No instructions for missing data

The result is predictable:

- Inconsistent answers

- Missing or incomplete information

- Outputs you cannot verify

In contract management, this is not just inefficient. It introduces compliance and operational risk. If you want usable contract data, you need structured prompts.

Common AI use cases in contract management

AI supports several contract management tasks. Most of them depend on how well the prompt is written.

Typical use cases include:

- Summarizing contracts to extract key terms

- Metadata extraction, such as payment terms, renewal dates, and liabilities

- Risk identification based on internal policies

- Deviation analysis between contract versions

- Ad hoc search to quickly find specific information

Each of these requires clear instructions. Without them, results vary too much to rely on.

How to structure AI prompts for contract management

A good prompt is not a question but a structured instruction. A practical framework includes five parts.

1. Define role and goal

Start with the role and the objective. This sets the AI’s perspective. It determines what the model prioritizes and how it responds.

Example:

- Role: Contract coordinator

- Goal: Extract key commercial terms and highlight deviations

A contract coordinator focuses on structured data and does not interpret the contract.

If you switch the role to a legal expert, the output changes. The AI starts evaluating and interpreting the content. This small change has a big impact.
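To make the contrast concrete, here is a minimal sketch of how the two roles could be rendered into the opening lines of a prompt. The `RoleSpec` class, the `may_interpret` flag, and the legal expert's goal text are illustrative assumptions, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleSpec:
    role: str
    goal: str
    may_interpret: bool  # hypothetical flag: may this role evaluate the contract?


def render_role(spec: RoleSpec) -> str:
    """Render the opening lines of a prompt from a role specification."""
    stance = (
        "Evaluate and interpret the clauses where relevant."
        if spec.may_interpret
        else "Report what the contract states. Do not interpret it."
    )
    return f"Role: {spec.role}\nGoal: {spec.goal}\n{stance}"


coordinator = RoleSpec(
    role="Contract coordinator",
    goal="Extract key commercial terms and highlight deviations",
    may_interpret=False,
)
# The goal wording for this role is an assumption made for illustration.
legal_expert = RoleSpec(
    role="Legal expert",
    goal="Assess key commercial terms and flag legal concerns",
    may_interpret=True,
)

print(render_role(coordinator))
print()
print(render_role(legal_expert))
```

Keeping the role in a small structure like this makes it easy to swap perspectives without touching the rest of the prompt.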

2. Define scope and source

Tell the AI exactly what it should work with. In contract management, this usually means:

- Use only the contract text

- Include appendices if relevant

- Do not use external knowledge

This reduces variability and prevents assumptions.

You should also require evidence:

- Direct quotes

- Clause or section references

This makes the output verifiable and suitable for compliance-related work.

You can also go one step further and require the AI to highlight the exact source in the contract. Some contract management tools already do this by default, linking answers directly to the relevant clause. This makes it easier to verify results and reduces the risk of misinterpretation.
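If your tool does not link answers to clauses for you, the evidence requirement can be checked after the fact. The sketch below assumes you have the contract as plain text and tests whether a quote returned by the AI really appears in it. The function names and the sample clause are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line breaks do not cause false mismatches."""
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_is_verbatim(quote: str, contract_text: str) -> bool:
    """Return True only if the non-empty quote occurs in the contract text."""
    needle = normalize(quote)
    return bool(needle) and needle in normalize(contract_text)


# Hypothetical contract excerpt with a line break inside the clause.
contract = """8.2 Payment. The Customer shall pay each invoice
within 30 days of the invoice date."""

print(quote_is_verbatim("pay each invoice within 30 days", contract))
print(quote_is_verbatim("within 60 days", contract))
```

A quote that fails this check is a strong signal that the answer needs manual review. The check is deliberately strict: it will also reject paraphrases and quotes with altered punctuation.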

Learn more about AI and automation in contract management: What is contract automation? Benefits, features, and best-practices.

3. Define the output format

Specify how the answer should be structured.

Examples:

- A table with predefined columns

- A single numeric value

- A structured format for external systems

Also define what happens if data is missing. A clear instruction like “If not found, return ‘Not found’” prevents unreliable guesses.
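As one possible way to define a structured format for external systems, the sketch below asks for a JSON object with fixed keys and rejects any reply that deviates from it. The field names are assumptions chosen for illustration.

```python
import json

# Hypothetical field list; replace it with the metadata you actually need.
FIELDS = ("payment_terms", "renewal_date", "liability_cap")
NOT_FOUND = "Not found"

FORMAT_INSTRUCTION = (
    "Return a single JSON object with exactly these keys: "
    + ", ".join(FIELDS)
    + f'. If a value is not stated in the contract, return "{NOT_FOUND}" for that key.'
)


def parse_reply(reply: str) -> dict[str, str]:
    """Parse the AI's reply and enforce the agreed key set."""
    data = json.loads(reply)  # raises ValueError if the reply is not valid JSON
    if not isinstance(data, dict):
        raise ValueError("Reply must be a JSON object")
    if set(data) != set(FIELDS):
        raise ValueError(f"Expected keys {sorted(FIELDS)}, got {sorted(data)}")
    return {field: str(data[field]) for field in FIELDS}


reply = '{"payment_terms": "30 days", "renewal_date": "Not found", "liability_cap": "EUR 1,000,000"}'
print(parse_reply(reply))
```

Rejecting malformed replies outright, rather than repairing them, keeps guessed or partial data out of downstream systems.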

4. Define decision requirements

Clarify when the task is complete. This is important for complex contract analysis.

Example:

- Each topic must have a value or “Not found”

- Each result must include a quote and reference

This ensures consistency across large contract datasets.
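These completion rules can also be checked mechanically. The following sketch assumes each result is a dictionary with `value`, `quote`, and `reference` keys (a hypothetical layout) and that a "Not found" result does not need a quote, since there is nothing to cite.

```python
NOT_FOUND = "Not found"


def completeness_issues(
    results: dict[str, dict[str, str]], topics: list[str]
) -> list[str]:
    """List every way the results fall short of the completion rules."""
    issues = []
    for topic in topics:
        entry = results.get(topic)
        if entry is None or not entry.get("value"):
            issues.append(f"{topic}: no value and no '{NOT_FOUND}'")
            continue
        if entry["value"] == NOT_FOUND:
            continue  # assumption: nothing to quote when the topic is absent
        for key in ("quote", "reference"):
            if not entry.get(key):
                issues.append(f"{topic}: missing {key}")
    return issues


# Hypothetical results for one contract.
results = {
    "payment_terms": {
        "value": "30 days",
        "quote": "within 30 days of the invoice date",
        "reference": "Clause 8.2",
    },
    "renewal_date": {"value": "Not found"},
    "liability_cap": {"value": "EUR 1,000,000", "quote": "", "reference": "Clause 12.1"},
}
topics = ["payment_terms", "renewal_date", "liability_cap", "governing_law"]

for issue in completeness_issues(results, topics):
    print(issue)
```

An empty list means the task is complete under these rules. Anything else can be sent back to the AI or routed to a reviewer.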

5. Provide examples

Examples improve output quality. Instead of describing the format, show it.

An example defines:

- What a summary should include

- How quotes are presented

- How results are structured

This approach, often called few-shot prompting, reduces ambiguity and improves consistency.
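A minimal sketch of assembling a few-shot prompt is shown below. The example excerpts, the pipe-separated answer layout, and the function name are all assumptions made for illustration. Note that one example deliberately shows the "Not found" case.

```python
# Hypothetical examples: one where the value exists, one where it does not.
EXAMPLES = [
    {
        "excerpt": "8.2 The Customer shall pay each invoice within 30 days of the invoice date.",
        "answer": 'Payment terms | 30 days | "within 30 days of the invoice date" | Clause 8.2',
    },
    {
        "excerpt": "14.1 This Agreement is governed by the laws of Sweden.",
        "answer": "Payment terms | Not found | - | -",
    },
]


def build_few_shot_prompt(
    instruction: str, examples: list[dict[str, str]], contract_text: str
) -> str:
    """Combine the instruction, worked examples, and the real contract text."""
    parts = [instruction, ""]
    for number, example in enumerate(examples, start=1):
        parts += [
            f"Example {number}",
            f"Contract excerpt: {example['excerpt']}",
            f"Answer: {example['answer']}",
            "",
        ]
    parts += ["Now the real task", f"Contract excerpt: {contract_text}", "Answer:"]
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Extract the payment terms as: Topic | Value | Quote | Reference.",
    EXAMPLES,
    "5.1 Invoices are due within 60 days of receipt.",
)
print(prompt)
```

Ending the prompt with an open `Answer:` line nudges the AI to continue in the same layout as the examples.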

Why language and clarity matter

Language directly affects output quality. A simple rule: Use the same language as the contract.

If the contract is in German, use a German prompt. This reduces interpretation errors.

In multilingual contracts, you can:

- Provide keyword hints in multiple languages

- Instruct the AI to follow the language of each section

Clear instructions reduce variability. Less variability means more reliable outputs.
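For multilingual contracts, the keyword hints can be kept in a small table and rendered into the prompt. This is a sketch under assumptions: the table contents and the function name are illustrative, and the translations should be reviewed by someone who knows your contract vocabulary.

```python
# Hypothetical hint table; extend it with the terms and languages you need.
KEYWORD_HINTS = {
    "payment terms": ["payment terms", "Zahlungsbedingungen", "conditions de paiement"],
    "termination": ["termination", "Kündigung", "résiliation"],
}


def render_language_instructions(hints: dict[str, list[str]]) -> str:
    """Render language guidance and keyword hints as prompt lines."""
    lines = [
        "Answer in the language of the section you are quoting.",
        "The topics below may appear under any of these terms:",
    ]
    for topic, terms in hints.items():
        lines.append(f"- {topic}: {', '.join(terms)}")
    return "\n".join(lines)


print(render_language_instructions(KEYWORD_HINTS))
```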

Practical examples of AI prompting in contract workflows

Structured prompts enable repeatable workflows. One common use case is metadata extraction.

For example, extracting payment terms:

- The AI reads the contract

- Identifies the relevant clause

- Returns the value in a structured format

This allows you to:

- Process large contract volumes

- Standardize contract data

- Build reports and track obligations
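Standardizing the extracted values usually needs a small post-processing step, because the same term can be written in several ways. The sketch below converts a payment term string into a number of days. The patterns cover only a few common phrasings and are an assumption, not an exhaustive rule set.

```python
import re
from typing import Optional

# Matches "Net 45" (group 1) or "30 days" / "60 calendar days" (group 2).
_PAYMENT_TERM = re.compile(
    r"\bnet\s*(\d+)\b|(\d+)\s*(?:calendar\s+)?days?\b", flags=re.IGNORECASE
)


def payment_term_days(value: str) -> Optional[int]:
    """Return the payment term in days, or None if no term is recognized."""
    match = _PAYMENT_TERM.search(value)
    if match is None:
        return None
    return int(match.group(1) or match.group(2))


for raw in ["30 days", "Net 45", "within 60 calendar days of invoice", "Not found"]:
    print(raw, "->", payment_term_days(raw))
```

Returning `None` for anything unrecognized, including "Not found", keeps unknown values visibly separate from real ones in reports.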

Another use case is policy monitoring. Instead of extracting data, the AI evaluates contracts against rules.

Example: Payment terms must be at least 45 days. The AI classifies contracts as:

- Compliant

- Not compliant

- Unclear

This shifts the focus from data extraction to decision support.
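Once the payment term is available as a number, the same rule can be expressed as a deterministic check, which is useful for cross-checking the AI's classification. This is a minimal sketch: the `Verdict` enum and function name are hypothetical, and the 45-day minimum comes from the example above.

```python
from enum import Enum
from typing import Optional


class Verdict(Enum):
    COMPLIANT = "Compliant"
    NOT_COMPLIANT = "Not compliant"
    UNCLEAR = "Unclear"


def classify_payment_terms(days: Optional[int], minimum_days: int = 45) -> Verdict:
    """Apply the policy 'payment terms must be at least minimum_days'."""
    if days is None:
        return Verdict.UNCLEAR  # no usable value was extracted
    if days >= minimum_days:
        return Verdict.COMPLIANT
    return Verdict.NOT_COMPLIANT


for days in (60, 45, 30, None):
    print(days, "->", classify_payment_terms(days).value)
```

Where the AI's label and this check disagree, the contract is a good candidate for manual review.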

Iterating prompts improves results over time

Prompting is not a one-time setup. The process is iterative:

- Draft the prompt

- Test it on real contracts

- Review the output

- Refine the instructions

You can also ask the AI to evaluate the prompt and identify gaps. Over time, this leads to:

- More consistent outputs

- Fewer errors

- More efficient workflows

Well-structured prompts become reusable building blocks for contract management processes.
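The test-and-review step is easier to repeat if you keep a small set of contracts with known answers and score each prompt version against it. In the sketch below, the two `stub_` functions are hypothetical stand-ins for "prompt version plus AI model". In practice, each would send the prompt to your AI tool and return its answer.

```python
import re
from typing import Callable

# Hypothetical labeled set: (contract excerpt, expected payment terms).
LABELED = [
    ("Invoices are payable within 30 days.", "30 days"),
    ("Invoices are payable within 60 days.", "60 days"),
    ("This Agreement is governed by Swedish law.", "Not found"),
]


def accuracy(extract: Callable[[str], str], labeled: list[tuple[str, str]]) -> float:
    """Share of labeled excerpts for which the extractor returns the expected value."""
    if not labeled:
        raise ValueError("The labeled set must not be empty")
    hits = sum(1 for text, expected in labeled if extract(text) == expected)
    return hits / len(labeled)


def stub_v1(text: str) -> str:
    """Stand-in for a vague prompt that always guesses a common value."""
    return "30 days"


def stub_v2(text: str) -> str:
    """Stand-in for a refined prompt with a 'Not found' rule."""
    match = re.search(r"\d+ days", text)
    return match.group(0) if match else "Not found"


print(f"v1: {accuracy(stub_v1, LABELED):.2f}")
print(f"v2: {accuracy(stub_v2, LABELED):.2f}")
```

Tracking a score like this across prompt versions shows whether a refinement actually helped or only changed the wording.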

🔑 Key takeaways

- Most AI prompts fail because they are too vague, leading to unreliable outputs.

- A strong prompt has five parts: role and goal, scope and source, output format, decision requirements, and examples.

- Clear boundaries reduce hallucinations and improve reliability.

- Structured prompting enables repeatable, scalable contract workflows.

- Iterating prompts over time leads to better accuracy and consistency.

We recently hosted a webinar on contract prompting. If you want to watch it on-demand, you can do that here.

FAQs

What is an AI prompt in contract management?

An AI prompt is a structured instruction that tells an AI system how to analyze a contract. Instead of asking a general question, a prompt defines:

- The task

- The scope

- The expected output

In contract management, prompts are used to extract data, identify risks, and analyze agreements consistently.

Why do most AI prompts fail in contract management?

Most prompts fail because they are too vague. Common issues include:

- No defined role or perspective

- No clear source limitations

- No structured output format

This leads to inconsistent and unreliable results. Clear, structured prompts reduce these problems and improve accuracy.

How can you reduce AI hallucinations in contract analysis?

You can reduce hallucinations by setting clear boundaries in the prompt. Key steps include:

- Restricting the source to the contract text only

- Requiring direct quotes and clause references

- Defining what to do if information is missing

For example, instruct the AI to return “Not found” instead of guessing.

Does the language of the prompt matter?

Yes. Using the same language as the contract improves accuracy. It reduces interpretation errors and helps the AI understand the context more precisely.

In multilingual contracts, you can guide the AI by listing key terms in multiple languages or instructing it to follow the language of each section.

Why does the role matter in a prompt?

The role defines how the AI approaches the task. A contract coordinator extracts structured data without interpretation. A legal expert analyzes and evaluates the contract. Choosing the right role ensures the output matches the intended use case.

Can prompts be reused across contracts?

Yes. Well-structured prompts can be reused across multiple contracts and workflows. This makes processes:

- More consistent

- Easier to scale

- Less dependent on individual users

Over time, prompts become standardized assets in contract management.

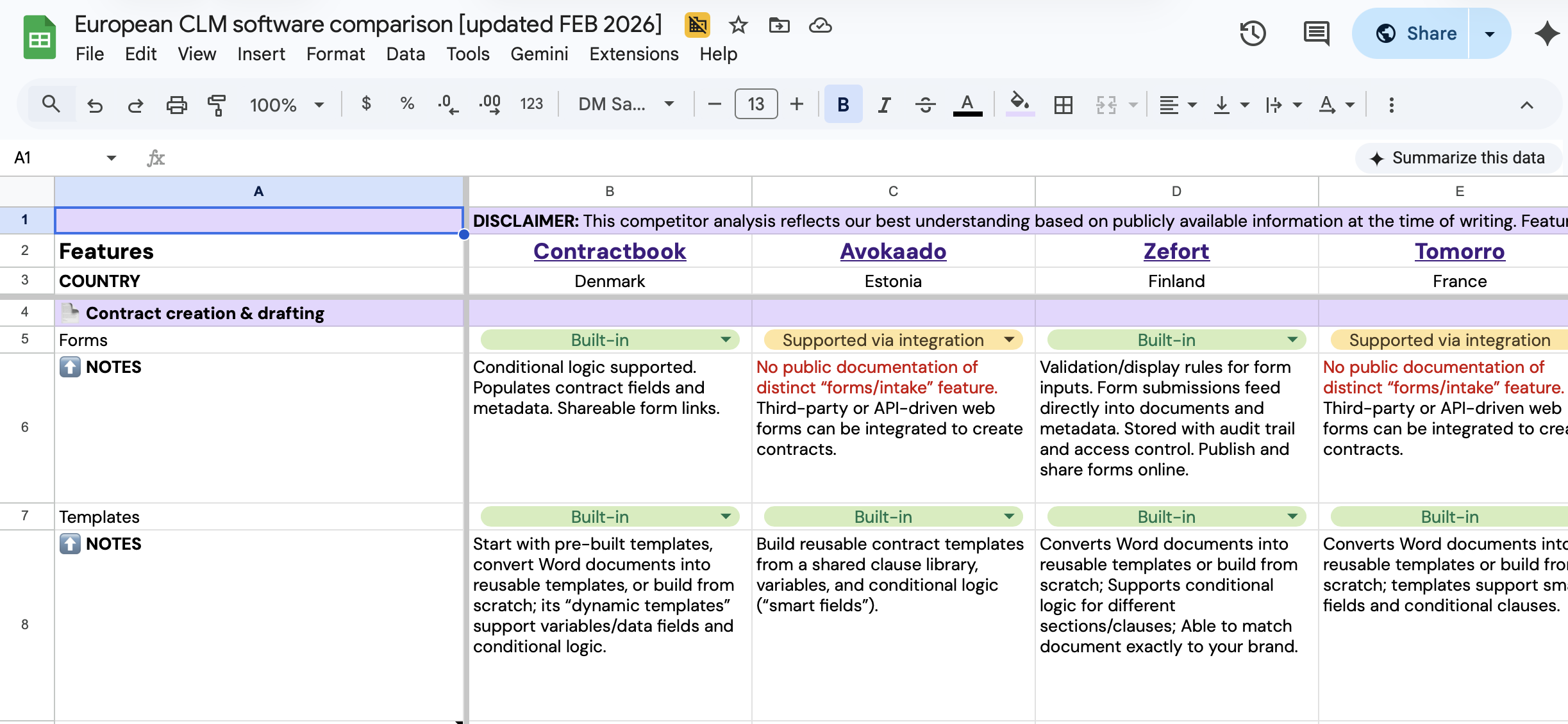

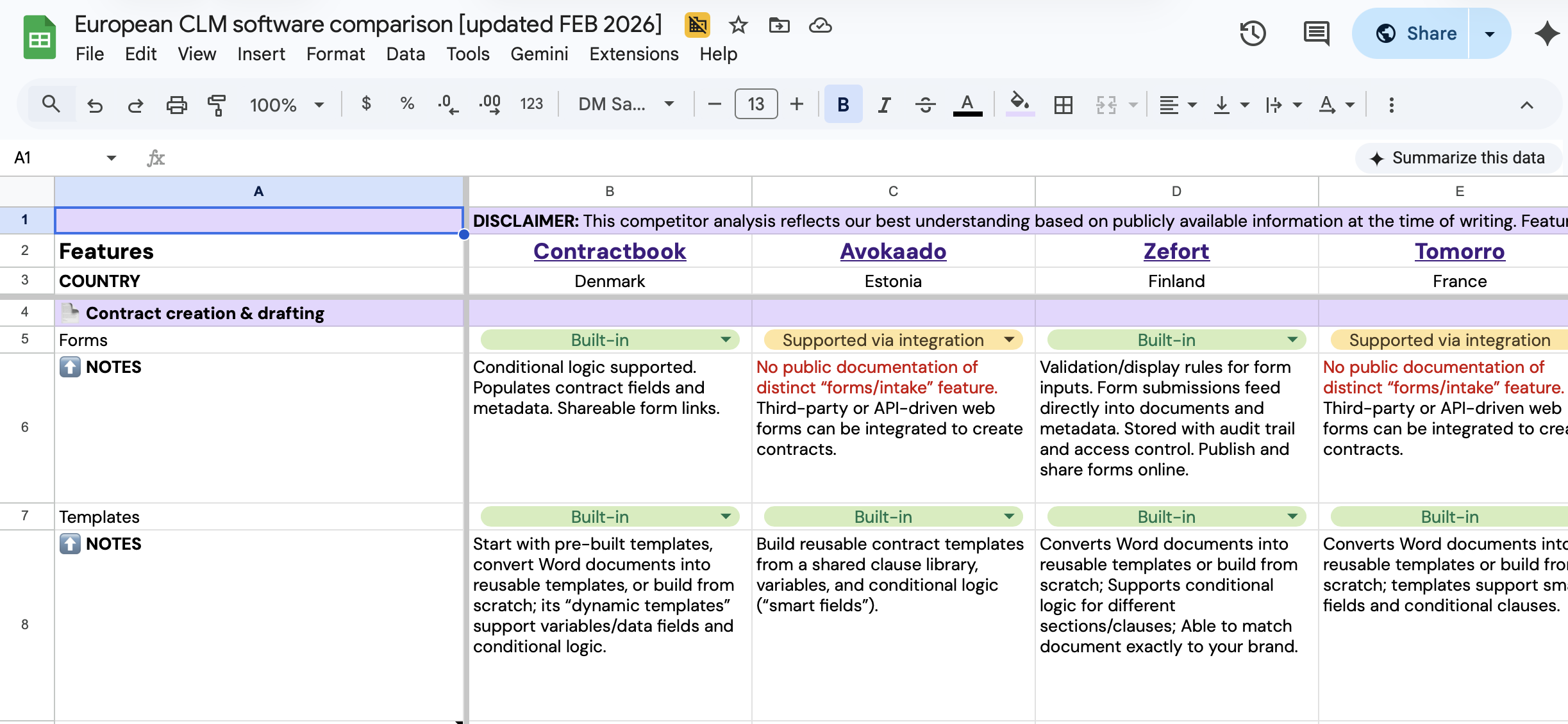

Compare European CLM leaders

Get a comprehensive breakdown of the top CLM solutions in one spreadsheet.
